Alberta Oil & Gas Payroll Is a Software Problem

Most “payroll software” assumes the data entry is the hard part. You punch in hours, the system multiplies by a rate, deducts the right things, and hands you a stub. For a salaried office that works fine. For an Alberta drilling rig it doesn’t, and the reason isn’t the data entry. It’s the rules.

Pre-spud roles paid by the day. Crew paid by the hour. Provincial overtime caps that differ between Alberta and B.C. Allowances that fire on some line items but not others, depending on shift type and rig. Statutory holiday pay that depends on whether the worker put in five of the nine days before the holiday. Retro adjustments that have to land in current-period earnings without breaking the current-period overtime calculation. The Sage 50 GL waiting at the end of the line, expecting a clean import.

There isn’t a stock payroll module that knows all of that. There isn’t a Power Platform form that captures it either. Power Platform handles the data entry (the timesheets, the employee roster, the rig assignments, the rate tables) beautifully. Where it falls down is the calculation step. That is a process, not a form, and it belongs in code.

The complexity is irreducible

It is tempting, the first time you look at a drilling payroll, to assume some piece of it can be simplified away. After several runs of writing one, here is what survives.

Mixed pay models inside a single day. A worker can be a manager (paid by the day) for part of a shift and an hourly crew member for the rest. The day-rate fraction has to be computed against the manager hours that day, the hourly portion has to flow into the regular-and-overtime cap calculation, and the two halves have to land on the same row in the export so accounting can reconcile.

Cross-province weekly caps. Alberta and B.C. have different weekly overtime caps. A worker pulling a Sunday in B.C. and a Monday in Alberta inside the same payroll week needs the weekly cap pro-rated by jurisdiction, not by calendar week. The cap-resolution logic runs per day, not once per week.

5-of-9 stat eligibility. Alberta’s general holiday rules require an employee to have worked five of the nine days before the holiday to qualify. That is a per-employee, per-holiday, rolling lookback over a sliding nine-day window, counted against actual worked days, with weekend handling that differs from the regular overtime week alignment.

Retro pay that doesn’t double-count. When prior-period adjustments roll into the current run, the retro hours have to add to taxable earnings now, but must not push current-period regular hours over the daily or weekly overtime cap. They get tagged on the way in, held out of the cap accumulators, and re-aggregated into the totals at the end.

Allowance deduplication. Mud pay, subsistence, camp allowances. The same allowance can fire on multiple timesheet lines in one day because the worker recorded their day in shifts. The policy says it pays once per day, but only when the role and the shift type qualify. The dedup is a small piece of code; getting it wrong is a payroll error every period until somebody catches it.

CAODC 1600-hour averaging. Drilling employers operating under the CAODC averaging permit accumulate hours toward an annual rolling threshold; what counts as overtime depends on whether the worker has crossed it. The engine has to track the running total per employee across runs, not just within a run.

None of this is the data entry. All of it is what happens to the data after it lands.

Treat it as a pipeline

Once you stop treating payroll as a form, the shape gets cleaner. It is an ETL pipeline with a stateful rules layer in the middle.

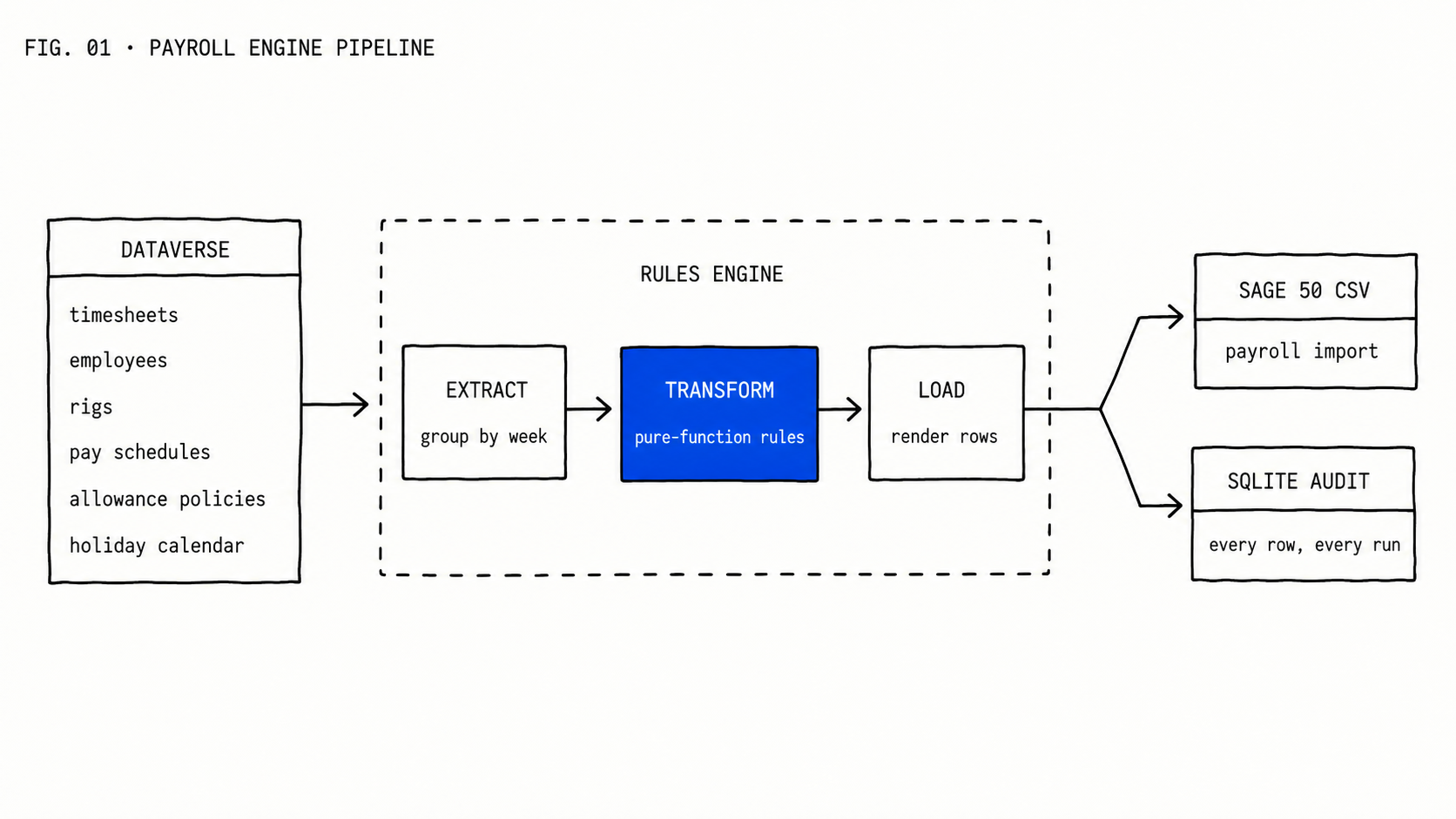

Extract. Pull timesheet lines, employee records, rig assignments, pay schedules, allowance policies, and the holiday calendar from Dataverse. Group the timesheet lines by (employee, week, jurisdiction). Categorize each line as payable, retro, or context (already paid, kept for lookback). Sort within each day by earning priority so the order is deterministic.

Transform. Iterate week by week, day by day, role-group by role-group. Resolve the cap for the day, resolve the pay schedule for the role, decide whether the worker is on manager or hourly mode for this slice of the day, run the math, write the result into a daily row with every derived value preserved. The math itself lives in pure functions: no I/O, no database, no state beyond the inputs and outputs. Stat eligibility, allowance dedup, retro isolation, ADW (average daily wage) accumulation, CAODC running totals all live as functions you can unit-test in isolation.

Load. Render the daily rows as a CSV in the format Sage 50 expects. Persist every row, plus the run’s metadata, into a local SQLite store with a unique run ID. That store is the audit log: months later, when somebody asks why row 47 paid what it paid, you can answer.

The boundary between Dataverse and the engine is the only architectural decision that matters. Dataverse owns the facts: who worked, where, when, on what rig, under what schedule. The engine owns the rules: how those facts turn into dollars. Sage owns the GL. Each layer is good at exactly one thing.

Why Python (and why C# would work too)

The engine we run is in Python. Pydantic for the domain models, pandas for the tabular middle, pytest for the rule tests, Streamlit for the operations UI that lets a payroll clerk inspect a run before exporting it. None of that is load-bearing. The same architecture works in C#/.NET, or in any language with decent dataclasses and a test runner. The choice is about the team’s ecosystem and what the operations side already runs, not about capability.

What does matter is keeping the rules layer outside Dataverse. We see clients try to live inside Power Platform end-to-end, with Power Automate flows or large Power Fx expressions doing the math. It works for two pay periods and breaks on the third. Flows are not where you want a sliding 9-day lookback or a stateful manager-status flip mid-day. Once the math is non-trivial, code is cheaper than configuration.

What you get from doing it this way

Determinism. Same inputs, same outputs, every time. No “the spreadsheet looked weird this period.”

Replayability. Re-run any past pay period and reconcile against the historical export. When a number disagrees, the diff is mechanical.

Tested rules. Every cap, every allowance, every retro-pay isolation is a unit test. Adding a new rule lands as a new test, then a new function. The cost of changing the rules drops by an order of magnitude.

Audit trail. Every row written to local SQLite with the run ID, the timestamp, the input source, and every derived field preserved. If a regulator or auditor asks why a row paid what it paid, you can show them the inputs, the path through the rules, and the output. Spreadsheets cannot do this.

Operational separation. Payroll clerks review runs in a UI; they don’t own the formulas. The formulas live in code, in version control, with reviewable commits. Turnover stops being a continuity risk.

Where this fits as a service

This is the kind of work we mean when we say custom software development: the part that doesn’t fit a low-code platform because the value is in the rules, not the forms. Power Platform plus a custom engine sitting beside it is a recurring shape for us. Inspections and audits stay on the platform. Complex business processes (payroll, royalty calculations, well-completion accounting, anything with rolling state and provincial regulatory rules) go in code.

We have built one of these for a drilling client running across Alberta and B.C., reading timesheets that crews enter through the same Dataverse forms their inspections live on, exporting to the Sage instance their accounting team already uses. The rules vary by client. The architecture doesn’t.

If your finance team is reconciling drilling payroll out of three spreadsheets and a Power Automate flow, and one person quietly understands the formulas, that’s the signal. The fix isn’t a faster spreadsheet. It is a rules engine that you can audit, test, and hand to the next person who needs to maintain it.